Why we don't let AI run the show in our research pipeline

The tempting shortcut

There’s a tempting shortcut when building AI-powered tools: just pipe everything through an LLM and call it done. Feed in raw web content, ask the model to extract insights, ship it.

We tried that approach early on. It doesn’t work. At least not for professional market research.

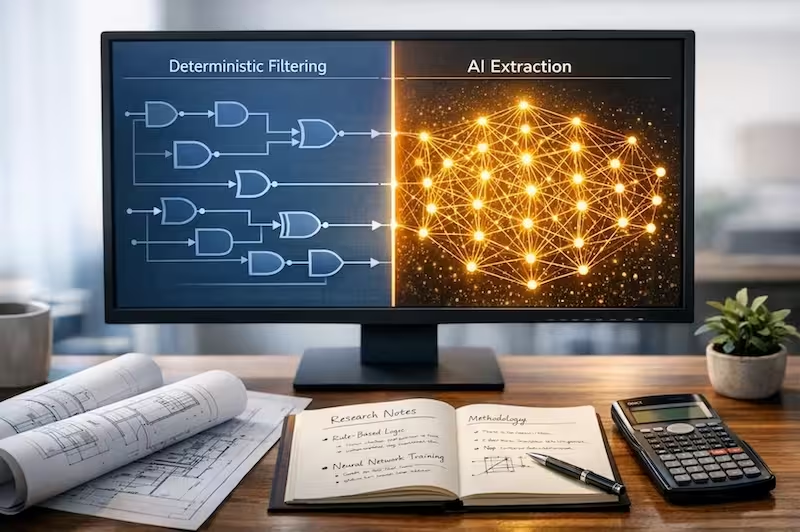

Here’s what we learned, and why we ended up with a hybrid pipeline that uses deterministic logic for the heavy lifting and AI only where it genuinely adds value.

The problem with “AI everything”

When you hand raw web content to an LLM and ask for insights, a few things happen consistently:

The model hallucinates structure that isn’t there. It finds patterns because you asked it to find patterns, not because they exist. It treats a 404 error page with the same weight as a detailed product review. And it has no way to tell you how confident it is in any of it. That gap is where tools like Mimir become relevant, continuously monitoring unprompted conversation across forums and communities so structured signal keeps flowing between your planned research cycles.

For a personal project, that’s fine. For a research agency putting findings in a client deck, it’s a serious problem. Research outputs need to be defensible. “The AI said so” is not a methodology.

What deterministic logic actually means

Before any content reaches our LLM, it goes through several filtering layers that use straightforward rules, not machine learning:

Does this URL look like a paginated index page or a sitemap? Skip it. Does this domain consistently produce off-topic content? Block it. Does this page have fewer than 50 words? Discard it. Does the text contain first-person language, opinion markers, and experience words? Keep it.

These checks are fast, cheap and predictable. Crucially, they are also auditable: you can look at a piece of content that got filtered and understand exactly why. We go deeper on what makes a source worth keeping in the first place in “What makes a good online source for qualitative research”, and explore why this rules-first approach outperforms purely generative pipelines in “Why deterministic RAG beats generative AI for research”.

No model weights. No probabilities. Just logic you can read and reason about.
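To make the idea concrete, here is a minimal Python sketch of what a rules-only gatekeeper along these lines could look like. It is an illustration, not our production code: the function name `gatekeep`, the `Decision` type, the word lists, the URL pattern and the example domain are all assumptions made for this example. Only the 50-word minimum comes from the rules described above.

```python
import re
from dataclasses import dataclass
from urllib.parse import urlparse

# All rule data below is hypothetical and chosen only for illustration.
BLOCKED_DOMAINS = {"spam-aggregator.example"}
INDEX_URL_PATTERN = re.compile(r"(sitemap|/page/\d+|[?&]page=\d+)", re.IGNORECASE)
FIRST_PERSON = {"i", "i'm", "i've", "my", "me", "we", "our"}
OPINION_OR_EXPERIENCE = {"think", "love", "hate", "tried", "bought", "used", "wish"}
MIN_WORDS = 50  # the threshold mentioned in the article


@dataclass(frozen=True)
class Decision:
    keep: bool
    reason: str  # the name of the rule that decided, so the outcome is auditable


def gatekeep(url: str, text: str) -> Decision:
    """Apply each rule in a fixed order and report which one decided."""
    host = urlparse(url).hostname or ""
    if host in BLOCKED_DOMAINS:
        return Decision(False, "blocked_domain")
    if INDEX_URL_PATTERN.search(url):
        return Decision(False, "index_or_sitemap_url")

    words = re.findall(r"[a-z']+", text.lower())
    if len(words) < MIN_WORDS:
        return Decision(False, "too_short")

    vocab = set(words)
    # Require at least one first-person word AND one opinion/experience word.
    if not (vocab & FIRST_PERSON and vocab & OPINION_OR_EXPERIENCE):
        return Decision(False, "no_first_person_opinion")
    return Decision(True, "passed_all_rules")
```

Every rejection carries the name of the rule that fired, so anyone reviewing a filtered page can see why it was dropped without re-running anything.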

Try the logic yourself

We’ve exposed a “lite” version of our gatekeeper logic below. Use the samples or paste your own research snippets to see how deterministic rules protect the pipeline from “expert noise” and low-value data before it ever reaches the AI.

LIVE TOOL

Where AI actually earns its place

Once the deterministic layer has done its job, we’re left with a much smaller set of genuinely relevant conversations. That’s where LLM extraction makes sense.

Identifying themes across hundreds of conversations, spotting nuance in how people describe a problem, and generating a synthesised insight from a cluster of related opinions: these are tasks where human-like language understanding genuinely helps, and where the cost and unpredictability of LLMs are worth it. If you’re weighing this kind of extraction against traditional social listening, “Social listening vs consumer research” covers the tradeoffs in detail.

The ratio matters. In our pipeline, roughly 80% of the filtering work happens before the LLM ever sees the data.
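The ordering can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not our actual pipeline: `run_pipeline`, the `Rule` type and the `extract` callable (a stand-in for whatever LLM call you use) are hypothetical names. The point is only that the extractor receives nothing the rules have rejected, and that every rejection is counted by rule.

```python
from collections import Counter
from typing import Callable, Iterable

# A rule is a (name, predicate) pair; the predicate returns True if the doc passes.
Rule = tuple[str, Callable[[str], bool]]


def run_pipeline(
    docs: Iterable[str],
    rules: list[Rule],
    extract: Callable[[list[str]], list[str]],
) -> tuple[list[str], Counter]:
    """Filter deterministically first, then hand only the survivors to `extract`."""
    rejected: Counter = Counter()
    kept: list[str] = []
    for doc in docs:
        # Name of the first rule the document fails, or None if it passes all.
        failed = next((name for name, passes in rules if not passes(doc)), None)
        if failed is not None:
            rejected[failed] += 1
        else:
            kept.append(doc)

    # The (expensive, unpredictable) extractor never sees filtered-out content.
    insights = extract(kept) if kept else []
    return insights, rejected
```

The returned counter gives a per-rule tally of what was dropped, which is one way to measure how much of the filtering happens before the model is involved.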

flowchart TD
    accTitle: AI research pipeline
    accDescr: A flowchart showing raw web content passing through deterministic filtering including word count, first-person language, and domain rules, then into LLM insight extraction for themes, confidence scores and traceability
    A["🌐 Raw web content<br/>Forums · Reviews · Surveys · Social"] --> B
    subgraph B[" 🔽 Deterministic filtering "]
        direction TB
        F1[Min. word count]
        F2[First-person language]
        F3[Domain allow / blocklist]
        F1 & F2 & F3 --> F4[✅ Auditable logic]
    end
    B --> C["🧠 LLM insight extraction<br/>Themes · Confidence · Traceability"]

Why this matters for research rigour

Professional researchers are rightly sceptical of AI tools. They’ve seen the hallucinations, the confident-sounding nonsense, the outputs that look impressive until someone asks “but how did you get there?”

A hybrid approach gives you a defensible answer to that question. The rules-based filtering is transparent and consistent. The AI is doing interpretation, not data selection. Those are meaningfully different things.

We’re building a tool for researchers who need to stand behind their work. That shaped every architectural decision we made.

If you need market signal that keeps flowing between research projects, start for free.

You can see the approach in action at Citium Labs. The tools are free to try.