Dovetail vs Condens: which research repository will your team actually use?

Dovetail is the category leader because it is beautiful and flexible. It is also, for many teams, an insight graveyard with better design. The real question is not which tool stores research better. It is which one your team will still be using six months after implementation.

Which research workspace actually gets used?

There is a Slack message that every insights manager has either sent or received. It goes something like: “Does anyone know if we’ve already researched this?” Sometimes it comes with a follow-up: “I think someone did something on this last year but I can’t find it.” And then, usually, the team either starts from scratch or makes a decision without the prior work.

That message is not a search problem. It is a structural problem. And the tool you chose to solve it probably made it worse.

The diagnostic question nobody asks before buying

When research teams evaluate repositories, they tend to compare features: tagging systems, AI assistance, integrations, export formats. What they rarely compare is what happens to the tool eighteen months after rollout, when the initial energy has worn off and nobody is maintaining the taxonomy they agreed on in week one.

Dovetail and Condens are the two tools that serious research operations tend to land on. They make genuinely different bets about what the core problem is, and understanding that difference is more useful than any feature comparison.

What Dovetail gets right, and where it leaves you

Dovetail became the category leader by making qualitative research feel less like administrative work. Its interface is clean, its assisted tagging is genuinely useful, and its flexibility means a team can adapt it to fit almost any workflow. For a solo researcher or a small team with a consistent process, it is hard to fault.

The assisted tagging deserves specific credit. When you are working through interview transcripts, Dovetail’s AI suggestions meaningfully reduce the time spent on initial coding. It surfaces candidate tags based on existing content, which helps when you are building on a taxonomy that already has shape. The reduction in manual effort is real at the level of individual projects.

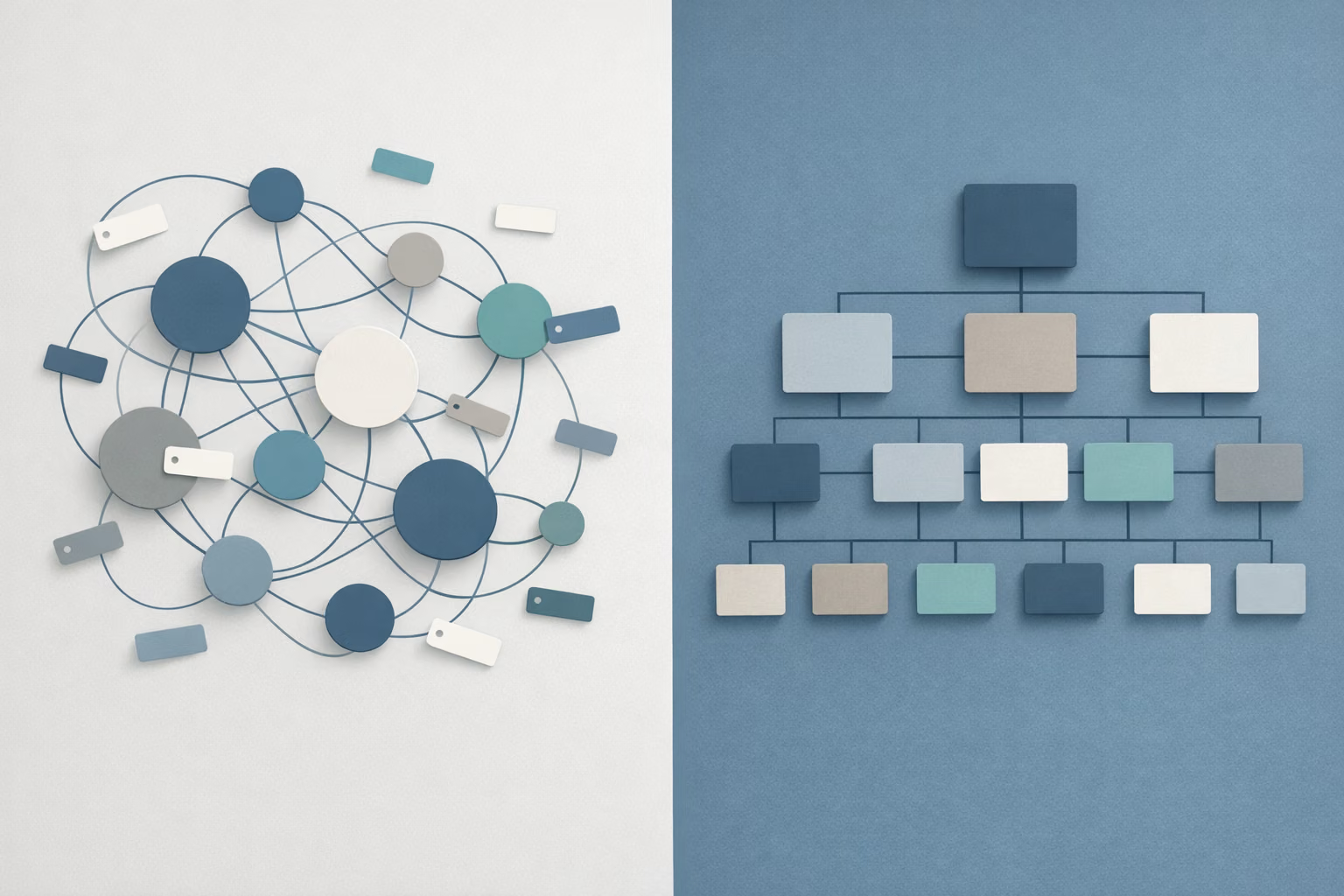

The problem emerges at the level of the repository, not the project. Dovetail’s flexibility is also its structural weakness. Because it does not impose a consistent architecture, every researcher or team that joins the workspace tends to build their own board logic. Tags multiply. Naming conventions drift. What starts as an organised system becomes an archive that requires institutional memory to navigate. After six to twelve months, the researchers who built the original structure are often the only people who can find anything in it. Everyone else sends the Slack message.

Call this board bloat: not a failure of effort, but a predictable outcome of a tool that optimises for individual project quality without enforcing shared organisational logic. Dovetail did not cause this problem. But it also does not prevent it.

For a broader comparison that includes Aurelius and EnjoyHQ, see Dovetail vs Aurelius vs EnjoyHQ.

What Condens trades away, and why that trade is worth making

Condens is a less immediately impressive product. It has a steeper initial setup because it asks you to make decisions about structure before you begin, decisions that Dovetail lets you defer. For teams used to the freedom of Dovetail, the early experience of Condens can feel constraining.

That constraint is, in fact, the product.

By requiring a consistent architecture from the start, Condens creates a repository that a new team member can navigate without a guide. The taxonomy is not implicit or researcher-dependent; it is built into how the tool works. At scale, across teams, across time, this matters significantly more than it does in the first three months of use.

The feature that addresses the other half of the adoption problem is Condens’ Stakeholder Magazine. The consistent failure mode for research repositories is not that findings are missing. It is that they are unread. Reports get delivered, PDFs get sent, decks get presented, and then the work sits in a folder that product managers and executives do not open. Condens addresses this directly. Stakeholder Magazine turns findings into a format that non-researchers will actually consume: a curated, readable digest rather than a raw research output. It is not a cosmetic feature. It is a structural solution to the distribution problem that most repository tools leave entirely to the researcher to solve.

Solving distribution is as important as solving storage, because research that is not read is functionally equivalent to research that was never done.

Adoption is a design problem, not a willpower problem

The frame that most teams use when evaluating these tools is capability: which tool can store, tag, and surface research most effectively? This is the wrong frame. The better question is which tool your team will actually use consistently six months after implementation.

If a tool requires ongoing effort to maintain its structure, that effort will eventually stop. If a tool’s output format does not match how stakeholders consume information, those stakeholders will stop asking for it. The insights manager who chose the tool will then spend a growing share of their time either maintaining the system themselves or explaining why it is not being used.

Dovetail is better for teams that have a dedicated research operations function, a clear internal taxonomy, and the ongoing capacity to maintain both. In that environment, its flexibility is an asset rather than a liability.

Condens is better for teams that need the repository to work without constant stewardship: teams where different researchers contribute at different times, where stakeholders need to receive information in a form they will actually read, and where the cost of a collapsing taxonomy is higher than the cost of an initially rigid structure.

Neither answer is universal. But the Slack message is a reliable indicator. If your team is still asking whether something has already been researched, the tool you chose is not working, regardless of how good its AI tagging is.

The gap that neither tool fills

Both Dovetail and Condens solve the same class of problem: organising what your team has already found. They differ significantly in how well they make that work accessible over time and across audiences. But they share a constraint. They are retrospective by design. They hold the outputs of research that was commissioned, run, and delivered.

Neither tool tells you what your team has not looked for yet.

Between research projects, markets move. Consumer language shifts. New frustrations emerge in forums and communities that no survey has asked about yet. By the time a new project is commissioned, scoped, and delivered, the gap between what the organisation knows and what it needs to know has already widened. Mimir is built for this layer, continuously monitoring unprompted conversation across forums, review platforms, and communities, so the signal that accumulates between your research projects does not go undetected. A repository without a feed is still a closed system.

If your repository is working but your research programme is still running behind the questions your market is asking, Mimir monitors the unprompted conversation your repository was never built to capture.

