How to build a moderator guide that keeps interviews on track

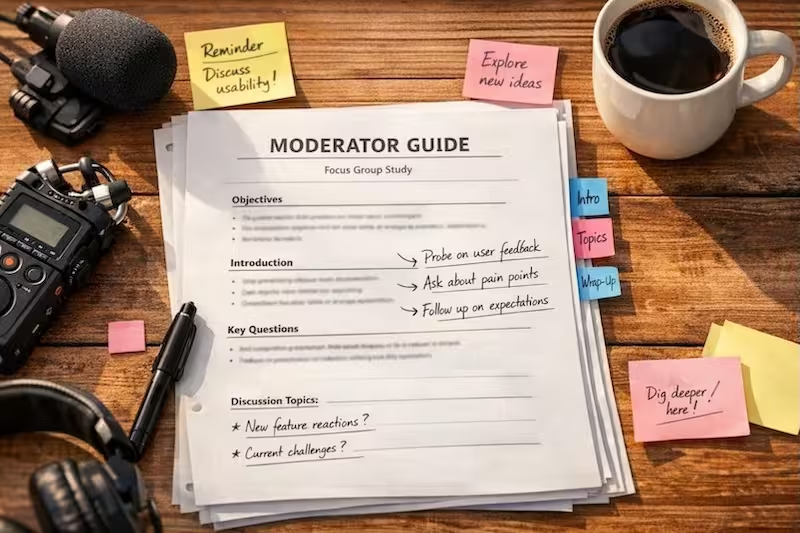

A good discussion guide tells you what to ask. A good moderator guide tells you how to ask it, what to listen for, and how to manage the conversation. Here is what goes into one and why it matters for consistent, high-quality interviews.

The document every moderator needs but few have

A discussion guide is a list of questions. It tells you what to ask, in roughly what order, and what topics to cover before the session ends.

A moderator guide is something else entirely. It tells you how to ask each question, what to listen for in the answer, when to probe and with what, and how to manage the conversation when it goes somewhere unexpected. It is the difference between knowing the route and knowing how to drive.

Most research projects start with a discussion guide and call it done. The moderator improvises the rest, drawing on experience, instinct, and whatever worked last time. For a seasoned moderator with a well-understood audience, that can work. For everyone else, it produces inconsistency, missed insights, and interviews that run over time because the moderator is inventing the facilitation as they go.

This article explains what a proper moderator guide includes, why it matters for research quality, and how to build one that works across interviewers and studies.

What a discussion guide misses

A typical discussion guide contains sections, questions, and maybe some rough timing. It assumes the moderator knows how to:

- Transition smoothly between topics without jarring the participant

- Probe effectively when an answer is shallow or vague

- Recognise when an answer contains something worth following

- Manage time without cutting off valuable threads

- Notice the non-verbal cues that signal discomfort, hesitation, or evasion

For an experienced moderator, these are second nature. For a junior researcher, a client employee filling in, or even an experienced moderator working in an unfamiliar culture or industry, they are not. The discussion guide leaves them to figure it out in real time, which is exactly when figuring it out is hardest.

The result is variability. Two moderators using the same discussion guide will run different interviews, probe different things, and come away with different findings. The data quality depends on who was in the room, which is not a foundation you can build a research programme on.

The anatomy of a moderator guide

A proper moderator guide adds four layers to the discussion guide: timing, probes, listening guidance, and facilitation notes.

Timing. Not just minutes per section, but minutes per question, with notes on which questions are flexible and which are essential. When time runs short, the moderator needs to know what to cut and what to protect. A timing column that says “core – protect” or “flex – can shorten if behind” makes those decisions possible in the moment.

Probes. Every question has natural follow-ups. Some are universal: “Tell me more about that”, “What did that look like in practice?”, “Anything else?” Others are specific to the question type or topic. A rating scale question needs probes for a midpoint selection (“What would make it higher or lower?”) and for an extreme selection (“That’s quite high – what makes you say that?”). A question about a process needs probes about steps, exceptions, and workarounds. Writing these into the guide means the moderator does not have to invent them mid-interview.

Listening guidance. The moderator needs to know what matters in the answer before they hear it. For a question about frequency, the listening guidance might be: “Do they estimate or give precise numbers? Do they qualify it (‘usually’ vs ‘always’)? Are there exceptions to the pattern?” For a question about satisfaction, the guidance might be: “Listen for unprompted complaints or praise. Note the strength of language (‘fine’ vs ‘love it’).” This turns the moderator from a passive question-asker into an active listener who knows what to look for.

Facilitation notes. These cover everything else: how to introduce the section, what to do if the participant gets emotional, how to handle a dominant voice in a group setting, when to show a stimulus, what to do if the participant asks for help during a task. They are the accumulated wisdom of experienced moderators, written down so every moderator can access it.
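The four layers, and the "core" versus "flex" timing labels, can be sketched as a small data structure. This is a minimal Python illustration, not the format of any particular tool. The `GuideEntry` class, the `trim_to_fit` helper, the one-minute floor, the sample questions, and the choice to shorten later flex questions first are all assumptions made for the example.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class GuideEntry:
    """One question plus the four layers a moderator guide adds (hypothetical schema)."""
    question: str
    minutes: float                 # timing layer
    priority: str                  # "core" (protect) or "flex" (can shorten if behind)
    probes: tuple = ()             # follow-ups written in advance
    listen_for: tuple = ()         # what matters in the answer
    facilitation_notes: tuple = () # how to run this part of the session


def trim_to_fit(entries, available_minutes, flex_floor=1.0):
    """Shorten flex questions, last one first, until the plan fits the time left.

    Core questions are never shortened. If the flex questions cannot absorb
    the whole overrun, the returned plan is still over time.
    """
    overrun = sum(e.minutes for e in entries) - available_minutes
    trimmed = []
    for entry in reversed(entries):
        if overrun > 0 and entry.priority == "flex":
            # Cut as much as needed, but never below the floor.
            cut = min(overrun, max(entry.minutes - flex_floor, 0.0))
            entry = replace(entry, minutes=entry.minutes - cut)
            overrun -= cut
        trimmed.append(entry)
    trimmed.reverse()
    return trimmed


# Illustrative questions only; 12 planned minutes, 9 available.
plan = [
    GuideEntry("How often do you use the product?", 5, "core",
               probes=("Tell me more about that",),
               listen_for=("Do they estimate or give precise numbers?",)),
    GuideEntry("Walk me through your last session.", 4, "flex"),
    GuideEntry("Anything you would change?", 3, "flex"),
]
fitted = trim_to_fit(plan, available_minutes=9)
print([e.minutes for e in fitted])
```

With nine minutes left for a twelve-minute plan, the two flex questions drop to 3 and 1 minutes while the core question keeps its 5. A real guide might prefer to shorten flex questions proportionally or in a hand-picked order. The point is only that the decision is written down before the session rather than made under pressure.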

Generate your guide skeleton

A guide skeleton splits the session into four time blocks, sized by your methodology and session length:

- Ethics/Consent (mostly fixed)

- Context/Warm-up

- Core questions (scales with time)

- Wrap-up

For a more granular builder that includes question-by-question timing, probes, and Word document export, use our moderator guide generator.
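The arithmetic behind a skeleton of this kind is simple enough to sketch. The version below assumes a fixed five-minute consent block and gives warm-up and wrap-up ten percent of the session each, with core questions taking whatever remains. Those numbers, and the `guide_skeleton` function itself, are illustrative assumptions rather than the allocations the generator actually uses. Shares would also vary by methodology, which this sketch ignores.

```python
def guide_skeleton(session_minutes, consent_minutes=5,
                   warmup_share=0.10, wrapup_share=0.10):
    """Split a session into four time blocks (all defaults are assumptions).

    Consent is held fixed; warm-up and wrap-up take a share of the session;
    core questions absorb everything that is left, so they scale with time.
    """
    warmup = round(session_minutes * warmup_share)
    wrapup = round(session_minutes * wrapup_share)
    core = session_minutes - consent_minutes - warmup - wrapup
    if core <= 0:
        raise ValueError("session too short for the fixed blocks")
    return {
        "Ethics/Consent": consent_minutes,
        "Context/Warm-up": warmup,
        "Core questions": core,
        "Wrap-up": wrapup,
    }


for length in (30, 60, 90):
    print(length, guide_skeleton(length))
```

Under these assumptions a 60-minute session leaves 43 minutes for core questions and a 90-minute session leaves 67, while the consent block stays at 5 minutes in both.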

Why the same guide works for different moderators

A well-built moderator guide does not just help inexperienced moderators catch up. It aligns experienced ones.

Two experienced moderators using the same discussion guide will still run different interviews because their individual instincts differ. One probes for emotional resonance; another probes for process detail. One picks up on contradictions; another follows the narrative thread. Both produce valuable data, but the data is not comparable. If the research goal is to aggregate findings across a programme of interviews, inconsistency is a problem.

A moderator guide that specifies probes and listening points creates consistency without rigidity. Both moderators still bring their experience and judgment. But they are probing the same things, listening for the same signals, and noticing the same patterns. The data becomes comparable across interviewers in a way that is not possible when each moderator relies on their own instincts.

This matters most in three situations: multi-moderator projects where findings need to be aggregated, longitudinal programmes where interviews happen over months or years, and client handoffs where the person who designed the research is not the person conducting it.

Between those interview cycles, unprompted conversation keeps happening in forums, review platforms, and communities. Mimir monitors that signal continuously, so the next wave of interviews can be grounded in what has shifted since the last one.

The four question types and what they need

Not all questions need the same level of support. The moderator guide’s job is to give each question what it requires.

Open-ended questions need the most. They need universal probes (tell me more, what did that look like), topic-specific probes based on keywords in the question, and listening guidance that helps the moderator recognise what matters. An open-ended question about a challenge needs different listening guidance than one about a decision. The guide should make that distinction.

Rating and selection questions need probes for the edges. A single-select question where the participant answers quickly may need a probe about why it was easy. A multi-select question may need a probe about which selection matters most. A rating question needs probes for the extremes and the midpoint. These are not obvious to every moderator, but they are knowable in advance.

Activity and task questions need facilitation notes. The moderator needs to know when to hand over a stimulus, how long to let the task run, what to do if the participant gets stuck, and what to note during observation. These are not probes in the usual sense; they are instructions for managing a different mode of interaction.
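Because this support is knowable in advance, it can be stored as a lookup keyed by question type. The sketch below is one hypothetical way to do that in Python. The type names, the `SUPPORT_BY_TYPE` table, and the `support_for` function are assumptions, and any probe wording not quoted earlier in this article is illustrative.

```python
UNIVERSAL_PROBES = (
    "Tell me more about that.",
    "What did that look like in practice?",
    "Anything else?",
)

# Hypothetical rule table: question type -> prepared support.
SUPPORT_BY_TYPE = {
    "open_ended": {
        "probes": UNIVERSAL_PROBES,
        "facilitation_notes": (),
    },
    "single_select": {
        "probes": ("That came quickly. What made it an easy choice?",),
        "facilitation_notes": (),
    },
    "multi_select": {
        "probes": ("Of the ones you picked, which matters most?",),
        "facilitation_notes": (),
    },
    "rating": {
        "probes": (
            "What would make it higher or lower?",         # midpoint
            "That's quite high. What makes you say that?",  # extreme
        ),
        "facilitation_notes": (),
    },
    "activity": {
        "probes": (),
        "facilitation_notes": (
            "Decide in advance when to hand over the stimulus.",
            "Set how long the task may run.",
            "Agree what to do if the participant gets stuck.",
            "List what to note while observing.",
        ),
    },
}


def support_for(question_type):
    """Return the prepared probes and facilitation notes for a question type."""
    try:
        return SUPPORT_BY_TYPE[question_type]
    except KeyError:
        raise ValueError(f"unknown question type: {question_type!r}") from None


print(support_for("rating")["probes"])
print(support_for("activity")["facilitation_notes"])
```

Since this is a plain table, the same question type always returns the same support, whoever runs the session. Topic-specific probes and listening guidance would need their own entries, which this sketch leaves out.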

Group dynamics add another layer

Focus groups and workshops introduce dynamics that one-to-one interviews do not. A moderator guide for a group setting needs additional elements.

Participation management. Quiet participants need to be drawn in. Dominant ones need to be managed. The guide should include language for both: “That’s helpful – I’d love to hear from others” for the dominant participant, and a direct check-in for the quiet one. It should also note when to do a round-robin (everyone answers) versus when to let the conversation flow.

Non-verbal cues. In a group, non-verbal signals matter differently. Someone nodding vigorously while another speaks is data. Someone checking their phone is data. The guide should tell the moderator what to watch for and when to follow up on it.

Building on others’ ideas. The richest focus group data comes from interaction, not just sequential answers. The guide should include prompts that encourage building: “Does anyone see it differently?”, “Who else has experienced that?”, “What do others think?”

Why the tool does not use AI

The moderator guide generator at the end of this article is deliberately rule-based, not AI-generated. There is a reason for that.

A moderator guide is not creative writing. It is a structured document that applies consistent logic to predictable situations. The probes for a single-select question do not need to be invented fresh each time; they follow patterns. The listening guidance for a question about frequency does not depend on the specific topic; it depends on the question type. The facilitation notes for an activity question are the same whether the activity is using an app or assembling furniture.

Rule-based generation produces guides that are predictable, reliable, and consistent. AI generation produces guides that are novel, unpredictable, and inconsistent. For a tool where the whole point is to reduce variability, AI is the wrong solution.

The rules themselves come from research methodology literature and accumulated practice, not from a model guessing what might sound right. The timing allocations are based on question type and study length, not on an LLM’s estimate of how long something should take. The probes are drawn from a library of patterns that have worked across hundreds of interviews. The listening guidance reflects what experienced moderators have learned to notice.

This is not a philosophical position against AI. It is a practical one. For this use case, rules work better.

Building your own library

If you moderate regularly, you will eventually develop your own sense of what works. The probes that consistently produce rich answers. The listening points that predict whether an insight is real. The facilitation techniques that keep groups engaged.

The value of a structured moderator guide is that you do not have to remember all of it in the moment. You write it down once, refine it over time, and have it available whenever you need it. The guide becomes a repository of your accumulated learning, not just a list of questions for the next session.

Over time, you will find yourself needing the guide less because the patterns have become internalised. But the guide still matters because it ensures consistency across the team and because it captures what you learned for the next person who runs this kind of study.

The difference a good guide makes

A project run with a discussion guide only and a project run with a full moderator guide produce different results. The difference is visible in the data.

Interviews run with a moderator guide are more consistent across interviewers. Probes are used where they matter. Listening points are noticed and followed. Time is managed without cutting off valuable threads. The transcripts show depth in the places that matter and efficiency everywhere else.

Projects run without one show the opposite: variable depth, missed opportunities, interviews that run over or under, and findings that depend on who was in the room.

The guide does not make the moderator. Experience, judgment, and empathy still matter. But the guide makes it possible to deploy those qualities consistently, across sessions, across interviewers, across projects. It turns moderation from an individual craft into a repeatable practice.

The moderator guide generator builds these elements automatically from your discussion guide sections and questions. Enter your questions, select the types, and get a complete guide with timing, probes, listening points, and facilitation notes.

If you want to complement your interview programme with continuous signal from unprompted consumer conversation between research cycles, start for free.