Why your RAG retrieves the wrong chunks (and how HyDE fixes it)

Embedding a user's question and searching for similar text sounds right. For formal documents like regulations, contracts, or technical standards, it systematically retrieves the wrong content. Here is the problem, and a technique that solves it.

The retrieval problem nobody talks about

When you build a RAG pipeline over a corpus of formal documents, the standard approach feels intuitive: embed the user’s question, find chunks with similar embeddings, pass them to the model as context. The assumption underneath is that a question about a topic will embed close to text about that topic.

For conversational documents, blog posts, or news articles, this assumption mostly holds. For formal documents, it breaks in a specific and consistent way.

Take a regulatory text like DORA, the EU’s Digital Operational Resilience Act. A user asks: “What are the ICT risk management obligations for banks?” Now consider what the regulation actually contains. Two kinds of text matter here: recitals and obligation articles.

Recitals are the introductory paragraphs of a regulation that explain its purpose and context. They are written in flowing, explanatory prose. One might read:

Financial entities should implement robust ICT risk management frameworks that ensure the resilience of their digital operations and protect against ICT-related disruptions…

Obligation articles are what the regulation actually requires. They are terse and imperative:

Article 6(1). Financial entities shall have a sound, comprehensive and well-documented ICT risk management framework…

The recital text is dense with terminology that matches the query: ICT risk management, financial entities, frameworks. The obligation article is compressed and precise. When the vector search scores chunks by similarity to the query, the recital prose consistently outranks the obligation articles, even though the obligation articles are the legally material content the user actually needs.

This is not a bug in the embedding model. It is a structural mismatch between how users ask questions and how formal documents are written. The user asks in plain language; the recitals are written in explanatory prose that sits close to plain language; the obligations are written in compressed legal syntax that does not. The embedding model does its job correctly. The retrieval result is still wrong.

The naive fix and why it does not scale

The obvious response is to expand the query: append domain keywords, inject the terminology that obligation articles use, and bridge the vocabulary gap by hand.

This works. For exactly one document.

When we hit this problem in our own pipeline, the first fix was a function that detected DORA-related queries and appended a list of obligation-article keywords before embedding. The retrieval improved immediately. Then we added a second regulation to the corpus. Then a third. Each new large, formally structured document would eventually suffer the same mismatch, and each would need its own hardcoded expansion rule. The maintenance cost grows linearly with the corpus. Worse, the rules need to be written by someone who has read the document carefully enough to know what vocabulary its obligation articles use. The whole point of a RAG system is to avoid that.
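For illustration, a rule of the kind described above might look like the sketch below. It is a hypothetical reconstruction, not the actual function from our pipeline: the ExpansionRule shape, the regular expression, and the keyword list are all invented. What it shows is structural: each regulation needs its own hand-written entry, written by someone who already knows that document’s vocabulary.

```typescript
// Hypothetical shape of a per-regulation expansion rule.
interface ExpansionRule {
  regulation: string;
  matches: RegExp; // detects questions about this regulation
  keywords: string[]; // vocabulary used by its obligation articles
}

// Invented example entry; the pattern and keywords are illustrative only.
const rules: ExpansionRule[] = [
  {
    regulation: "DORA",
    matches: /\b(dora|ict risk|digital operational resilience)\b/i,
    keywords: ["shall", "financial entities", "ICT risk management framework", "Article 6"],
  },
  // Every new regulation needs another hand-written entry here.
];

// Append the keywords of every matching rule to the question before embedding.
function expandQuery(question: string, ruleSet: ExpansionRule[]): string {
  const extra: string[] = [];
  for (const rule of ruleSet) {
    if (rule.matches.test(question)) extra.push(...rule.keywords);
  }
  return extra.length > 0 ? `${question} ${extra.join(" ")}` : question;
}
```

The function itself is trivial; the cost lives in the rules array, which grows by one carefully researched entry per formally structured document.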

What HyDE does

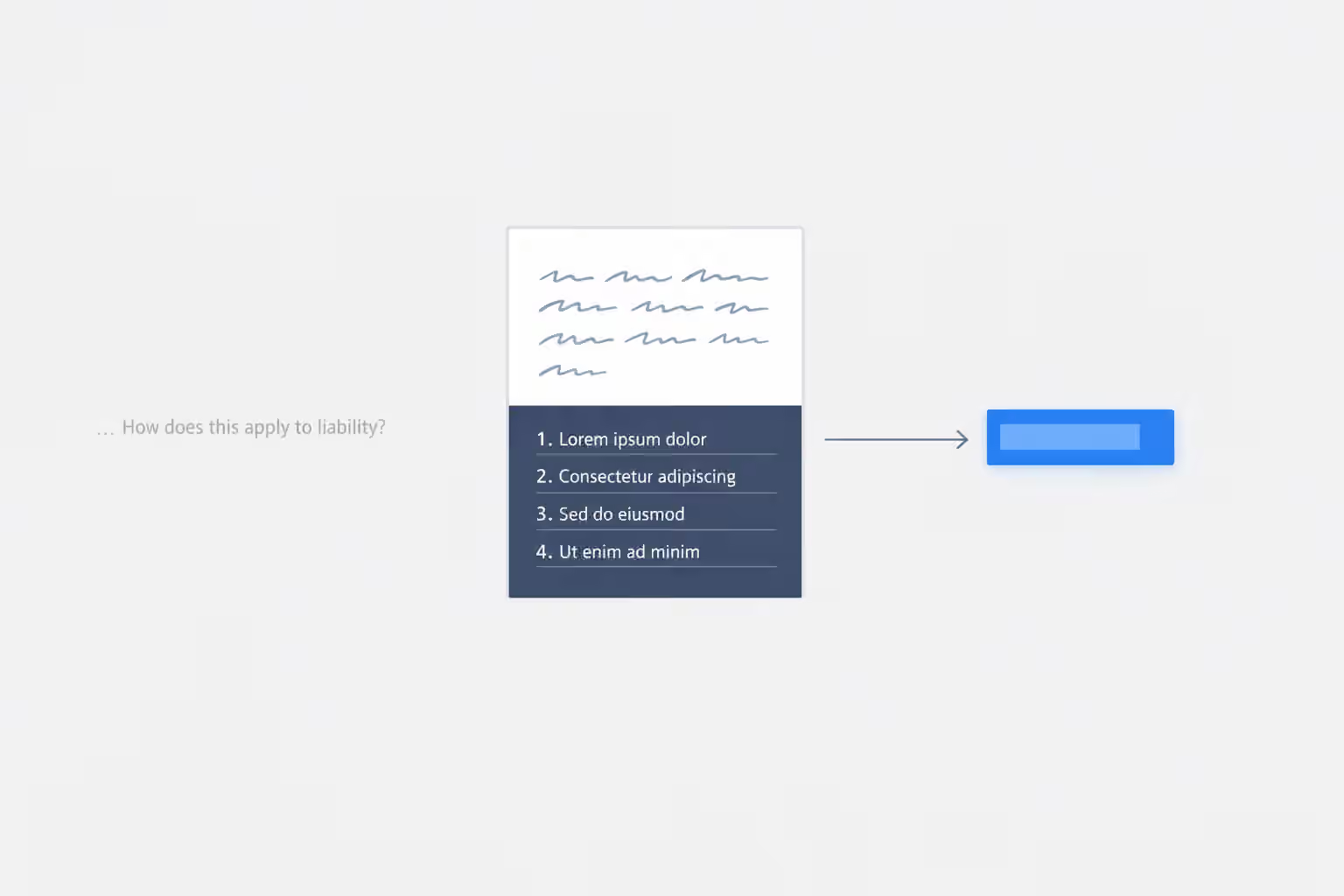

HyDE (Hypothetical Document Embeddings) was introduced by Gao et al. in 2022 in “Precise Zero-Shot Dense Retrieval without Relevance Labels”. The insight is straightforward: instead of embedding the user’s question and searching for similar chunks, ask the LLM to write a short hypothetical answer to the question first, then embed that hypothetical answer and use it as the search vector.

The key is that a hypothetical answer to a regulatory question naturally reads like a regulatory answer. If the question is “What are the ICT risk management obligations for banks under DORA?”, a well-prompted LLM generates something like:

Under Article 6 of DORA, financial entities shall maintain a sound, comprehensive and well-documented ICT risk management framework. The management body must define, approve and oversee the implementation of this framework. Financial entities shall identify, classify and document all ICT-supported business functions and map their ICT assets accordingly…

That hypothetical answer embeds much closer to the actual obligation articles than the raw question does. The semantic mismatch largely disappears, not because we manually bridged the gap, but because the LLM generated text in the style of the documents we are searching.

No regulation-specific code. No handcrafted keyword lists. The same approach works across every formal document in the corpus automatically.
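The direction of the effect can be seen even with a crude scorer. The sketch below is a toy: it replaces the embedding model with bag-of-words counts (so it measures shared vocabulary, not meaning) and scores only the two example passages from this article, using the first sentence of the hypothetical answer above. Under those assumptions the raw question ranks the recital first, while the hypothetical answer ranks Article 6(1) first. A real embedding model produces different scores, so treat this as an illustration rather than evidence.

```typescript
// Toy "embedding": lowercase word counts. A stand-in for a real embedding
// model; it only measures shared vocabulary, not meaning.
function embedBagOfWords(text: string): Map<string, number> {
  const counts = new Map<string, number>();
  for (const token of text.toLowerCase().split(/[^a-z0-9]+/)) {
    if (token) counts.set(token, (counts.get(token) ?? 0) + 1);
  }
  return counts;
}

// Cosine similarity between two sparse count vectors.
function cosine(a: Map<string, number>, b: Map<string, number>): number {
  let dot = 0;
  a.forEach((count, token) => {
    dot += count * (b.get(token) ?? 0);
  });
  const norm = (v: Map<string, number>): number => {
    let sumSq = 0;
    v.forEach((c) => {
      sumSq += c * c;
    });
    return Math.sqrt(sumSq);
  };
  return dot / (norm(a) * norm(b));
}

// The two example passages from this article (ellipses dropped).
const passages = [
  {
    id: "recital",
    text: "Financial entities should implement robust ICT risk management frameworks that ensure the resilience of their digital operations and protect against ICT-related disruptions",
  },
  {
    id: "article6",
    text: "Financial entities shall have a sound, comprehensive and well-documented ICT risk management framework",
  },
];

// Return the id of the passage most similar to the search text.
function bestPassage(searchText: string): string {
  const query = embedBagOfWords(searchText);
  let bestId = "";
  let bestScore = -Infinity;
  for (const p of passages) {
    const score = cosine(query, embedBagOfWords(p.text));
    if (score > bestScore) {
      bestId = p.id;
      bestScore = score;
    }
  }
  return bestId;
}

const question = "What are the ICT risk management obligations for banks?";
// First sentence of the hypothetical answer shown above.
const hypothetical =
  "Under Article 6 of DORA, financial entities shall maintain a sound, comprehensive and well-documented ICT risk management framework.";

console.log(bestPassage(question)); // recital
console.log(bestPassage(hypothetical)); // article6
```

Only the search text changes between the two calls; the passages and the scorer stay the same, which mirrors how HyDE swaps the query vector and leaves retrieval untouched.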

The implementation

The HyDE step runs before retrieval and produces the text that is embedded as the search vector. That hypothetical answer is never shown to the user.

```typescript
// Produces a hypothetical answer used only as retrieval input; never shown to the user.
private async generateHypotheticalAnswer(question: string): Promise<string> {
  const result = await this.aiService.prompt(
    `You are an expert in EU financial regulation. Generate a concise hypothetical answer to the following regulatory question.

Write it as a factual compliance document — use specific article references, legal obligation language, and precise regulatory terminology. Do not hedge or caveat. Write as if you are certain of the answer.

This answer will be used only for semantic search — it will not be shown to the user.

Question: ${question}`,
    {
      // The hyde-generation task profile sets temperature 0.3 (see below).
      taskType: 'hyde-generation',
      cacheSystemPrompt: false,
    },
  );
  return result.response.content;
}
```

The task profile for hyde-generation uses temperature 0.3 rather than 0. Some variation in the hypothetical answer is acceptable and may help retrieval by slightly broadening the search vector. The hypothetical does not need to be accurate; it needs to be stylistically similar to the documents being searched.
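The implementation above embeds a single hypothetical answer. The original HyDE paper goes further: it samples several hypothetical documents and averages their embeddings together with the embedding of the query itself. If a single sample at temperature 0.3 turns out to be noisy, that variant is a natural extension. The sketch below assumes two hypothetical injected functions, GenerateFn (an LLM call such as generateHypotheticalAnswer) and EmbedFn (an embedding call); neither is a specific library API, and the default of four samples is arbitrary.

```typescript
// Hypothetical signatures standing in for the LLM call and the embedding call.
type GenerateFn = (question: string) => Promise<string>;
type EmbedFn = (text: string) => Promise<number[]>;

// Element-wise mean of equal-length vectors.
function meanVector(vectors: number[][]): number[] {
  if (vectors.length === 0) throw new Error("meanVector needs at least one vector");
  const dim = vectors[0].length;
  const mean: number[] = new Array(dim).fill(0);
  for (const v of vectors) {
    if (v.length !== dim) throw new Error("all vectors must have the same length");
    for (let i = 0; i < dim; i++) mean[i] += v[i] / vectors.length;
  }
  return mean;
}

// Sample n hypothetical answers in parallel (non-zero temperature makes them
// differ), embed each one plus the raw question, and average the vectors.
async function hydeSearchVector(
  question: string,
  generate: GenerateFn,
  embed: EmbedFn,
  n = 4, // arbitrary default for this sketch
): Promise<number[]> {
  const hypotheticals = await Promise.all(
    Array.from({ length: n }, () => generate(question)),
  );
  const vectors = await Promise.all([question, ...hypotheticals].map(embed));
  return meanVector(vectors);
}
```

The trade-off is n generation calls per query instead of one. They run in parallel here, but they still multiply the cost and tie latency to the slowest sample.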

The embedding of the hypothetical answer then replaces the embedding of the raw query in the vector search:

```typescript
// Before HyDE: embed the raw question
// const queryVector = await embeddingService.embedQuery(question);

// After HyDE: embed a hypothetical answer instead
const hypothetical = await this.generateHypotheticalAnswer(question);
const queryVector = await embeddingService.embedQuery(hypothetical);

// Retrieval is otherwise unchanged
const chunks = await vectorStore.similaritySearch(queryVector, { k: 20 });
```

The rest of the pipeline (retrieval, context construction, inference) is unchanged.

What the results showed

We run a fixed set of five queries against the same test corpus and user profile across every iteration. The most affected query was the DORA ICT risk management question described above.

Before any improvement, with fixed-word chunking, the query retrieved one chunk, triggered a false gap detection alert, and produced an answer that hedged heavily. The system had the right document. It could not find the right chunks.

After article-level chunking and diversity-aware retrieval (distributing retrieval slots across documents rather than letting one document monopolise them), the result improved substantially: six chunks retrieved, no false gap, a detailed answer with specific article citations. That improvement came from better chunking and retrieval, not from HyDE. But it still depended on a hardcoded query expansion function specific to DORA.
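Diversity-aware retrieval can be implemented in several ways. One simple option, sketched below under assumed names (ScoredChunk, diverseTopK), over-fetches candidates from the vector search, groups them by source document, and fills the k slots round-robin: the highest-scoring document gets the first slot but cannot take all of them. This illustrates the idea; it is not necessarily the allocation rule used in the pipeline described here.

```typescript
// Hypothetical shape of a scored chunk returned by the vector search.
interface ScoredChunk {
  docId: string;
  chunkId: string;
  score: number; // higher means more similar to the search vector
}

// Fill k slots round-robin across documents. Documents are visited in order
// of their best-scoring chunk; within a document, chunks go best-first.
function diverseTopK(candidates: ScoredChunk[], k: number): ScoredChunk[] {
  const byDoc = new Map<string, ScoredChunk[]>();
  const sorted = candidates.slice().sort((a, b) => b.score - a.score);
  for (const chunk of sorted) {
    const queue = byDoc.get(chunk.docId) ?? [];
    queue.push(chunk);
    byDoc.set(chunk.docId, queue);
  }
  // Map keeps insertion order, so queues are already ordered by best score.
  const queues = Array.from(byDoc.values());
  const picked: ScoredChunk[] = [];
  while (picked.length < k && queues.some((q) => q.length > 0)) {
    for (const queue of queues) {
      if (picked.length >= k) break;
      const next = queue.shift();
      if (next) picked.push(next);
    }
  }
  return picked;
}

// Plain top-3 would return a1, a2, a3, all from document A.
const candidates: ScoredChunk[] = [
  { docId: "A", chunkId: "a1", score: 0.9 },
  { docId: "A", chunkId: "a2", score: 0.85 },
  { docId: "A", chunkId: "a3", score: 0.8 },
  { docId: "B", chunkId: "b1", score: 0.7 },
  { docId: "C", chunkId: "c1", score: 0.6 },
];
console.log(diverseTopK(candidates, 3).map((c) => c.chunkId).join(", ")); // a1, b1, c1
```

A softer alternative is a per-document cap (for example, at most half the slots per document), which keeps more of the top-scoring document while still leaving room for others.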

After HyDE, the hardcoded function was removed entirely. The result was essentially identical: five chunks retrieved, no gap, a detailed cited answer. The score on the five test queries did not change.

That last point deserves emphasis: the architectural value of HyDE is not visible in those numbers. Before HyDE, any new large regulation added to the corpus would eventually produce the same recital-crowding problem, and someone would need to write a new expansion rule for it. After HyDE, that problem is handled without any per-regulation code, whatever the regulation’s size, structure, or vocabulary, as long as the LLM knows the domain well enough to write a plausible hypothetical (see the limitation below). The improvement is in the system’s ability to scale, not in the test scores.

| Query | Baseline 1 (fixed-word chunks) | Baseline 2 (article chunks + expansion) | Baseline 3 (article chunks + HyDE) |
|---|---|---|---|
| DORA ICT obligations | Poor, false gap | Good | Good |
| IFRS 18 reporting | Good | Partial (corpus gap) | Partial (corpus gap) |
| SIPS oversight | Good | Good | Good |
| NSFR / securities financing | Good | Good | Good |
| AML customer due diligence | Good | Good | Good |

The IFRS 18 partial result reflects a corpus gap, not a retrieval failure: only the EU adoption regulation is ingested, not the core text of the standard. HyDE cannot retrieve documents that are not in the corpus.

When HyDE helps, and when it does not

HyDE is most useful when there is a consistent semantic gap between how users phrase questions and how the source documents are written. Regulatory text, legal contracts, medical literature, technical standards, and academic papers all share this property: the authoritative, obligation-bearing content is written in compressed formal language that does not resemble natural language queries.

It is less useful when users and documents share vocabulary naturally. A corpus of blog posts, customer feedback, or news articles does not have this mismatch. Standard query embedding retrieves well without the extra step.

There is also a meaningful limitation: HyDE depends on the LLM having enough domain knowledge to write a stylistically appropriate hypothetical answer. For well-documented, widely discussed domains like EU financial regulation, the LLM can produce dense, accurate-sounding regulatory language without difficulty. For highly specialised or very recent domains where the LLM’s training coverage is thin, the hypothetical answer may be underspecified or stylistically off, which could hurt rather than help retrieval. In those cases, the hardcoded expansion approach may be more reliable, even if it does not scale as well.

The cost

HyDE adds one LLM call per query before retrieval starts. With a 400-token ceiling on the hypothetical answer, this adds approximately 500 ms to 1 second of latency in production conditions. The cost of that call is negligible relative to the downstream inference call, which processes the retrieved chunks, the user profile, and the full question together.

If latency is a concern, the HyDE call can be parallelised with a preliminary chunk count check, or the hypothetical answer can be cached per question hash. Neither optimisation has been necessary in practice.
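The caching option is simple to sketch. The version below assumes Node.js (it uses createHash from node:crypto) and a hypothetical GenerateFn signature for the LLM call; the normalisation step (trim, collapse whitespace, lowercase) is an assumption of this sketch about what should count as the same question. It caches the promise rather than the resolved string, so concurrent identical questions share one call.

```typescript
import { createHash } from "node:crypto";

// Hypothetical signature for the LLM call (e.g. generateHypotheticalAnswer).
type GenerateFn = (question: string) => Promise<string>;

// Wrap a generator so repeated questions reuse the stored hypothetical answer.
// The cache key is a SHA-256 hash of the normalised question.
function withHypotheticalCache(generate: GenerateFn): GenerateFn {
  const cache = new Map<string, Promise<string>>();
  return (question: string): Promise<string> => {
    // Assumed normalisation: trim, collapse whitespace, lowercase.
    const normalised = question.trim().replace(/\s+/g, " ").toLowerCase();
    const key = createHash("sha256").update(normalised).digest("hex");
    let pending = cache.get(key);
    if (!pending) {
      pending = generate(question);
      cache.set(key, pending);
      // Evict failed generations so a later call can retry.
      pending.catch(() => cache.delete(key));
    }
    return pending;
  };
}
```

A plain Map grows without bound, so a production version would likely need an eviction policy or a shared store. Caching also fixes the hypothetical for a given question, which removes the run-to-run variation that temperature 0.3 introduces.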

Where this fits in a broader RAG architecture

HyDE solves one specific problem: the semantic mismatch between query vectors and formal document vectors. It does not address chunking strategy, retrieval diversity, noise filtering, gap detection, or output auditability. Those are separate concerns that require their own solutions.

The chunking strategy matters as much as the retrieval approach. In our case, the measured quality improvement on the test queries came from switching fixed-word chunks to article-boundary splits, before HyDE was introduced. The two changes address different failure modes: poor chunking fragments the content you need to retrieve; poor query embedding means you search for the wrong thing even if the content is chunked correctly.

For a detailed account of the broader architecture that this retrieval step sits within, including the audit trail, zero-temperature inference, and cryptographic output hashing that make research outputs defensible and traceable, see “Why deterministic RAG beats generative AI for research”.